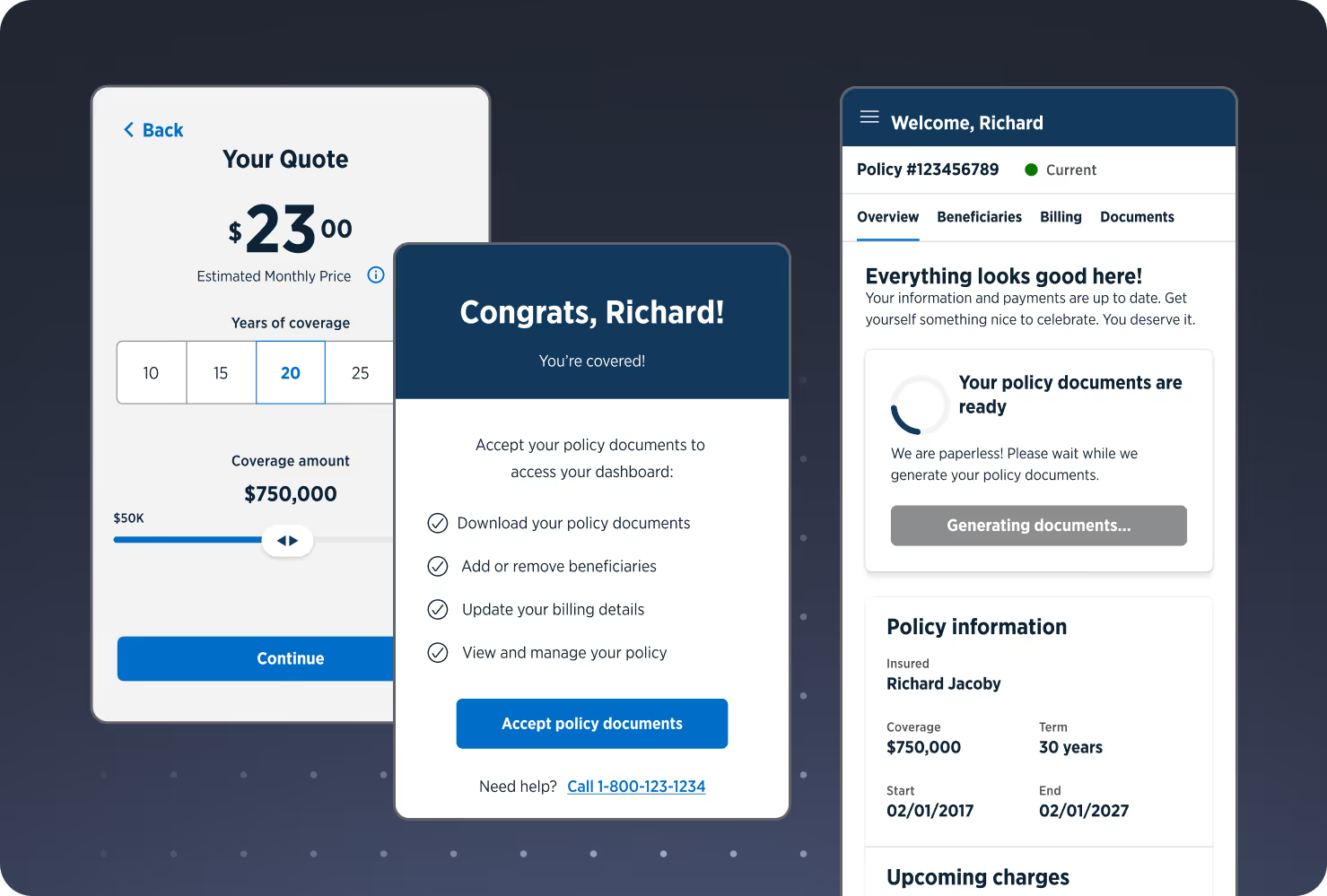

AI and underwriting: helping (not replacing) human judgment

New AI technologies open up exciting possibilities in insurance, but carriers will need to balance them with a cautious, strategic approach that keeps the human element front and center.

A decision maker’s assistant

In the underwriting space specifically, there’s no shortage of messy, complicated data. Large language models (LLMs) are designed to grapple with huge quantities of data, but an easy first application of AI-enhanced underwriting automation is to help humans cut through the clutter, find business insights, and make better decisions themselves. The goal is not to make the decisions for them.

AI and application fraud

Many leaders are also excited about AI’s potential to help prevent and detect fraud in life insurance, thanks to its ability to sift through, cross-check, and verify large amounts of data. That optimism comes as a recent NerdWallet article reported that 21% of insurance applicants admit to lying on an application.

Beware of bias

Peter Eliason, Bestow’s VP of Data and Analytics, recently co-presented at the AHOU 2024 underwriting conference. Touching on how algorithms, if left unchecked, could potentially worsen bias in life insurance over time, he says, “It’s not set it and forget it. As time goes on, societal, demographic, and other factors can change, so we must be careful to monitor results on an ongoing basis to help keep potential biases at bay.”

This sentiment is echoed by Bryan Simms, co-founder and president of Mammoth Life, who shared his big plans for fighting bias in underwriting with Insurance Business Magazine.

Exercising cautious optimism

This tech is here to stay, but use cases will take time to mature to the accuracy required for life insurance risk assessment, where underwriters are looking for low-frequency, high-severity events.

As carriers pour more and more resources into developing LLMs and generative AI models in search of maximum efficiency, it’s important not to lose sight of the big picture. The life insurance industry is about people, and these new tools should be viewed as just that: tools to help people work faster and smarter.

AI in life insurance underwriting FAQs

How can AI assist decision-making in life insurance underwriting?

AI technologies, especially large language models (LLMs), can help underwriters process vast amounts of data efficiently. Instead of making decisions automatically, these tools highlight insights, patterns, and anomalies that enable humans to make more informed choices. By reducing manual effort, AI allows underwriters to focus on nuanced assessments that require their vast experience and professional judgment.

What role does AI play in preventing insurance application fraud?

AI can cross-check and verify large datasets to detect inconsistencies or false statements in insurance applications. This proactive use of AI has shown the potential to enhance accuracy and reduce risk for carriers while maintaining compliance standards.
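As a rough illustration of the cross-checking idea, the sketch below compares what an applicant stated with a reference record and lists the fields that disagree. It is a simple rule-based stand-in, not a model and not any carrier's actual process. The function name `cross_check`, the field names, and the tolerance values are all hypothetical, and in practice anything flagged would go to a human reviewer rather than trigger an automatic decision.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass
class Discrepancy:
    field: str
    stated: Any
    on_record: Any


def cross_check(
    application: Dict[str, Any],
    record: Dict[str, Any],
    tolerances: Optional[Dict[str, float]] = None,
) -> List[Discrepancy]:
    """Compare applicant-stated values with a reference record.

    Numeric fields listed in `tolerances` may differ by up to the given
    amount; every other shared field must match exactly.
    """
    tolerances = tolerances or {}
    found = []
    for field, stated in application.items():
        if field not in record:
            continue  # nothing to verify this field against
        on_record = record[field]
        tol = tolerances.get(field)
        if tol is not None:
            mismatch = abs(stated - on_record) > tol
        else:
            mismatch = stated != on_record
        if mismatch:
            found.append(Discrepancy(field, stated, on_record))
    return found


# Hypothetical example data, invented for illustration only.
application = {"tobacco_use": False, "weight_lb": 180, "date_of_birth": "1985-04-12"}
record = {"tobacco_use": True, "weight_lb": 184, "date_of_birth": "1985-04-12"}

flags = cross_check(application, record, tolerances={"weight_lb": 10})
for d in flags:
    print(f"Review {d.field}: stated {d.stated!r}, record shows {d.on_record!r}")
```

Here the four-pound weight difference falls within the assumed tolerance, so only the tobacco answer is surfaced for review.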

Why is bias a concern when using AI in underwriting?

Algorithms can unintentionally perpetuate existing societal or demographic biases if they are not carefully monitored. Over time, changes in data, regulations, or population trends can amplify these biases and create unfair outcomes. As this technology matures, the emphasis must be on continuous oversight to ensure AI supports equitable and responsible underwriting decisions.
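One way to picture continuous oversight is a recurring check of outcomes by group and by period. The sketch below is a minimal example under stated assumptions: decisions arrive as `(period, group, approved)` tuples, groups are compared by approval rate, and a period is flagged when the lowest rate falls below a chosen fraction of the highest. The function names, the sample data, and the 0.8 default threshold are illustrative choices, not an industry or regulatory standard, and a real fairness review would look at far more than one ratio.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return {period: {group: approval rate}} from (period, group, approved) tuples."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [approved, total]
    for period, group, approved in decisions:
        tally = counts[period][group]
        tally[0] += int(approved)
        tally[1] += 1
    return {
        period: {group: a / n for group, (a, n) in groups.items()}
        for period, groups in counts.items()
    }


def flag_periods(rates, min_ratio=0.8):
    """List periods where the lowest group rate is below min_ratio times the highest."""
    flagged = []
    for period, by_group in rates.items():
        highest = max(by_group.values())
        lowest = min(by_group.values())
        if highest > 0 and lowest / highest < min_ratio:
            flagged.append(period)
    return flagged


# Hypothetical decisions, invented for illustration only.
decisions = [
    ("2024-Q1", "A", True), ("2024-Q1", "A", True),
    ("2024-Q1", "B", True), ("2024-Q1", "B", True),
    ("2024-Q2", "A", True), ("2024-Q2", "A", True),
    ("2024-Q2", "B", True), ("2024-Q2", "B", False),
]

rates = approval_rates(decisions)
needs_review = flag_periods(rates)
print(needs_review)
```

In this toy data the two groups are approved at the same rate in the first quarter and diverge in the second, so only the second quarter is flagged for a closer human look.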

How should life insurers approach AI adoption?

Insurers should take a cautious, strategic approach, prioritizing accuracy and compliance. Implementing AI incrementally, monitoring results, and keeping humans in the loop help ensure that tools support underwriters without introducing unintended risks. This balance allows carriers to benefit from efficiency gains while maintaining trust and ethical standards.
